Data Mining for Humanists

Big Data is watching you, and Lev Manovich thinks that’s just fine.

By Dave Mandl, March 8, 2021

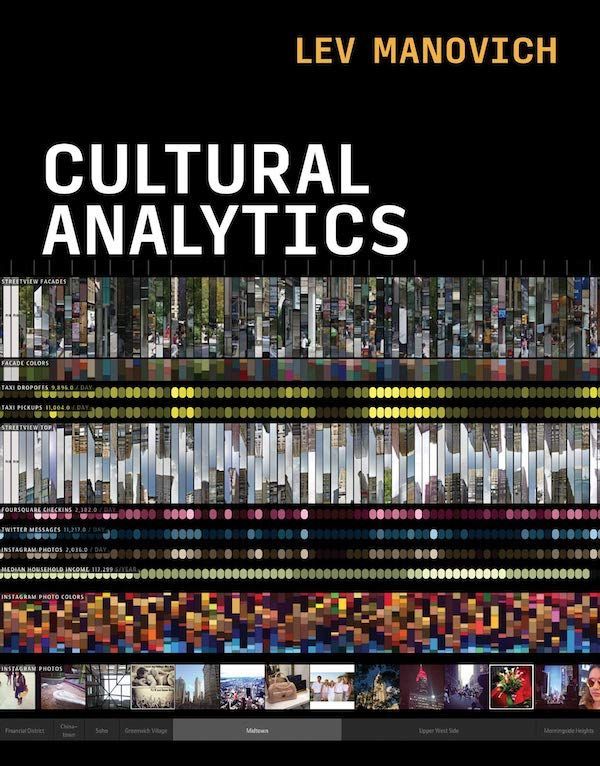

Cultural Analytics by Lev Manovich. The MIT Press. 336 pages.

IN THE LAST 20 YEARS or so, several factors have combined to make it possible to gather and analyze vast amounts of digital information, far larger than any datasets that could be processed previously. The ever-increasing speed of computer networks and the plummeting cost of storage make data collection on a colossal scale much easier, and new “Big Data”–specific technologies and algorithms enable us to digest, filter, and crunch this mountain of information with little effort. At the same time, with the spread of internet use to more or less everyone, and an increasing number of activities conducted online — shopping, chatting, watching videos, creating and sharing cultural artifacts — the data and contextual “metadata” from all these activities are being made available (either voluntarily or unwittingly) to a slew of commercial and marketing enterprises and academic and research institutions.

Working on the assumption that this particular glass is half full (an arguably flawed assumption, but we’ll put that aside for the moment), Lev Manovich, in his new book Cultural Analytics, focuses on the positive side of Big Data, specifically how the new techniques and technologies can be used to advance our knowledge of culture, or even reshape culture for the better. The lab Manovich runs at UC San Diego aims to use methods from computer science, data visualization, and media art to analyze contemporary media and users’ interactions with it. He also hopes to change how we view culture, both figuratively and literally, in ways that are hard to predict and will continue to take shape as we continue to corral the data digitally. “The scale of culture in the twenty-first century,” Manovich writes, “makes it impossible to see it with existing methods.” Which raises the question, “How can we see (for example) one billion images?” We all know, more or less, how to look at and assess a single painting, but how do we “look at” a billion of them — a kind of exercise that is completely new to the human race? And what will be revealed when we do? What can we hope to find out?

The territory covered by Cultural Analytics is huge, but Manovich has two central goals: (1) to describe the methods being used to gather, organize, categorize, and tag the flood of cultural data that has suddenly become available to us, and (2) to explore ways to improve these processes, by addressing biases and other flaws built into the old ways of looking at culture. With the production of culture now more “democratized” than ever — billions of photographs and videos are uploaded to the internet every day, by people of all races and classes — the universe of images now available to us represents much more than a small group of relatively privileged artists. And using modern image-analysis techniques, we can now programmatically capture details like the kinds of poses adopted by the subjects in people’s photos, what types of clothing they’re wearing, and so on. We can also study everything from changes in the colors that artists have used in their paintings over time to what galleries those paintings are shown in as their careers progress, using publicly available data. Among other examples Manovich gives from the work he’s done are color screenshots of a few massive surveys containing “graphs” of paintings and magazine covers organized by such variables as hue and brightness; these reveal trends and groupings that would have been almost impossible to visualize 50 years ago.

As a textbook for those learning the ropes of cataloging and analyzing cultural artifacts, Cultural Analytics seems indispensable. The book covers every kind of decision involved in these processes, in as much detail as you could possibly ask for — including, among other things, a long digression on the use and misuse of statistics. It also includes an in-depth treatment of one of the most fraught and difficult problems of assembling cultural data for analysis: “sampling.” The old saw warning us that “the map is not the territory” means, in this context, that any representation of a cultural artifact that is not the artifact itself is necessarily incomplete and is almost guaranteed to have subjective judgments embedded in it. So if, for example, you’re building a database of information about songs, what are the important features you want to capture about each of them? The key signature? The instruments used? The number of instruments? Whether the drummer used drumsticks or brushes? The ages of the musicians? It’s nearly impossible to know what information about a song might be considered significant to us at some time in the future, something you have to take into account when designing and assembling your data sample. Manovich discusses this process at length. In one example he cites, he compared the images in manga publications designed for male and female readers and found consistent disparities in brightness, demonstrating that visual style in this medium is used to construct gender differences. This kind of study wouldn’t be doable without modern image-analysis tools and with only such basic “features” as story lines captured.

A whole class of data that barely existed before this century but is now available in abundance is behavioral information: everything from “likes” to web searches to Alexa requests to the length of time spent on particular web pages. Clearly, all this data, especially when collected in quantities that reveal patterns across large populations, can tell us a lot about general trends and preferences in cultural consumption — for example, helping designers tailor products to users’ preferences and usage habits. Manovich touts this as almost exclusively a good state of affairs, but it’s also where things start to get murky. Harvesting mouse clicks is not remotely in the same category as, say, some mad-scientist experiments I can think of, but it does raise a similar question: even if gathering certain information helps further human knowledge, can we ignore the harm it will likely cause as a by-product?

In the case of digital data, the harm is obviously not life-threatening, but what about the implications for privacy? For-profit corporations (the main parties gathering user/consumer data, although they also make much of it available to academic researchers) don’t have the best track record in this area. Some of them have promised not to harvest or retain certain kinds of user data and have subsequently broken those promises. (Knowing that they’ve been punished with multimillion-dollar fines in some cases isn’t much consolation.) Some have used interactive dolls to effectively subject young children to surveillance. And, to return to an earlier theme, even if data is being gathered for the most benign of reasons today, who’s to say how it might be used 20 years from now, with Eric Trump in the White House? Once there’s a database of everything you’ve clicked on, every message you’ve sent, every embarrassing video you’ve made with friends since you were three years old — in 2021, this is a cold fact rather than a paranoid fantasy — it will be impossible to put that genie back in the bottle.

To my mind, Manovich goes a little too easy on the entities gathering most of this data, addressing the problematic side of the phenomenon only in brief, polite asides. He also makes what feels like a tenuous analogy between contemporary behavior-monitoring technologies and their far less invasive forerunners. “The systematic observation and capture of people’s behavior and interactions for further analysis is nothing new for ethnography, anthropology, urban studies, sport, medicine, and other fields,” he writes, citing examples from the 1860s and 1910s that are drastically different in quality and quantity from what Google, Netflix, and Amazon are doing today. Beyond that, while the cultural-analysis goals Manovich touts seem generally justifiable or even exciting, is the harvesting of every kind of data imaginable for the purposes of improving some media company’s website or advertising really worth it? Manovich argues that the creation of Facebook’s “like” button was genuinely well intentioned, meant to improve the user experience and not just for the purpose of collecting data. I’ll take his word for that, but I wouldn’t bet that a fiendish lightbulb didn’t eventually go off in Mark Zuckerberg’s head after he had amassed trillions of user likes. We only recently learned that police body cams, ostensibly put into service for citizens’ protection, are now being used against us, as instruments of facial recognition. Ceding power more or less permanently on the assumption that it will never be turned against us has never worked out well.

It’s a fact, however, that many of the spying technologies used against us don’t work perfectly, and possibly never will — I’m thinking, for example, of how search engines are regularly gamed using “search engine optimization,” to the eternal chagrin of Google, or how many AI-generated book, music, and film recommendations still miss the mark. Political polls seem to get less accurate with every election. And, as Manovich points out, capturing our most intimate information accurately in the first place is not a cakewalk: “[O]ur technological measurements and data representations of human emotional and cognitive states, memories, imagination, or creativity are less precise and detailed than our measurements of artifacts.” So, there could be a faint glimmer of hope for the Luddites among us surveillees. Or not: People forget that software, being built by humans, often has those humans’ biases embedded in it, like the AI engines that have been found to be skewed toward people with white skin. These could prove to be even worse than the “analog” biases of the past, since much of the machinery of our world is built on the assumption that computers never make mistakes.

But I have strayed from the subject. Cultural Analytics is meant to be a textbook on how Big Data can be organized and analyzed to help us get a handle on culture, and to improve the ways we use and understand culture, and on that score it’s hard to imagine how the book could be more complete. Working with Big Data of the kind Manovich is talking about entails at least a cursory knowledge of statistics, database organization, maybe information theory, and of course cultural analysis, and he covers all those topics and more. The book seems to be a shoo-in to become the bible on the subject. But do I wish Manovich had sprinkled more skepticism throughout, suppressed his almost unmitigated gee-whiz-ism just a bit, and used his pulpit to warn us about the very real dark side of the digital panopticon he describes? Yes, I do.

¤

LARB Contributor

Dave Mandl’s writing has appeared in The Wire, The Believer, The Register, The Comics Journal, The Rumpus, Volume 1 Brooklyn, and other publications. He was the longtime music editor at The Brooklyn Rail and an editor at Semiotext(e)/Autonomedia. He hosts the radio show World of Echo at WFMU and plays the bass guitar in various groups.
