Weird Science

From anti-vaxxers to Flat Earthers, the public’s (and scholars’) perception of science shifted sometime between 1990 and 2010, writes Michael Gordin.

By Michael D. Gordin, March 14, 2022

ABOUT 20 YEARS AGO, shortly after I had finished graduate school in the history of science, a friend was entering the field at a different university. She had done impressive research as an undergraduate in one of the byways of not-so-respectable science — spoon bending, phrenology, UFOs — and was considering whether to continue in the same vein. Her new advisor cautioned, “If you work on fringy things, people might begin to think you are fringy” (or something to that effect; I wasn’t there). To be clear, he was being entirely supportive. He had (still has) an impressive command of the field, and he was not wrong. Aside from a brief flourishing in a few pockets of the sociology of science, principally in the United Kingdom in the 1970s, “disreputable” topics did not promise a path to glory.

More than two decades into the 21st century, that exchange would go quite differently. Bookshelves are buckling under the weight of history books about the oddities of science. In 2010, historians of science Naomi Oreskes and Erik M. Conway published Merchants of Doubt (Bloomsbury), which traced the careers of a small group of scientists who were instrumental in spreading theories that denied tobacco smoke’s negative impact on human health, or acid rain’s on the environment, or anthropogenic climate change’s on absolutely everything. (A movie adaptation has since appeared.) The following year, Norton released MIT historian David Kaiser’s How the Hippies Saved Physics, which followed countercultural physics graduate students in the 1970s who explored, among other quantum questions, the theory of faster-than-light travel and the practice of hallucinogenic drugs. In 2012, yet another historian of science, W. Patrick McCray, published The Visioneers (Princeton University Press), a detailed analysis of space colony and nanotechnology enthusiasts (and the occasional pornographer) in the ’70s and ’80s, after which nanotechnology alone escaped the badlands and was embraced as a staple of materials science. These are not fringe scholars nor are their books marginal: each was awarded the Watson Davis and Helen Miles Davis Prize of the History of Science Society, for “books in the history of science directed to a wide public,” in 2011, 2013, and 2014 respectively.

This surge of attention to the fringes of science has not just happened within the boutique field of the history of science. If you have been following science communication for the past 15 years or so, you will have come across countless discussions of what might be considered “pathologies” in science. Salient among these is the “replication crisis,” principally in psychology and biomedicine, which has rather shockingly shown that many of the canonical findings in, say, cognitive psychology and cancer genetics are unreplicable by other scientists. [1] Since replicability is frequently held up as one of the most significant indications that science generates reliable knowledge, the pervasiveness of such failings is truly worrisome.

Meanwhile, popular and scientific periodicals alike have been bursting with incidents of scientific fraud or misconduct (in some cases alleged, in others confirmed): Jan-Hendrik Schön’s organic semiconductors at Bell Labs (2002), Victor Ninov’s reported discovery of elements 116 and 118 at Lawrence Berkeley Lab (2002), Hwang Woo-Suk’s claim to have cloned human embryonic stem cells (2005), Marc Hauser’s evolutionary psychology announcing advanced cognition in rather unprepossessing cotton-top tamarins (2010), among others. [2] Alongside these egregious forms of academic misconduct, there have been a host of others, such as plagiarism, gaming the metrics that get scientists promoted through fraudulent citations and affiliations, and laundering cooked industry numbers through ghostwriting. [3]

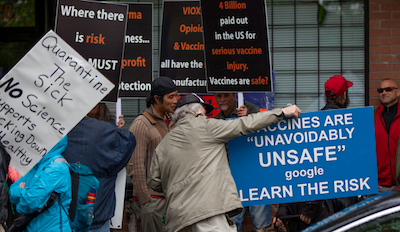

Scientists across the board have expressed righteous consternation about these matters, but they are not the only ones grousing about contemporary science. The raging pandemic has raised the volume: mountains of criticism are now being lobbed or hurled at the Centers for Disease Control and Prevention (it’s too slow, too fast, too politicized, too politicized in the wrong direction) and at misinformation/resistance/hesitancy/denial about vaccines, masks, and tests. Whatever the official guidance, you will find those who “do their own research” attacking it. (Some of these voices come from fully credentialed scientists who do scientific research for a living.) This might all seem new, and it is — just not as new as you might imagine.

¤

Take vaccine hesitancy, for instance. Since the 1990s, increasing numbers of parents have exempted their children from once-mandatory school vaccination requirements, taking advantage of the religious or even “philosophical” objections to vaccination permitted by many states. [4] There are consequences: the last decade has brought periodic outbreaks of measles, sometimes severe ones. (COVID-19 is very bad; epidemics of measles would be worse.) For most of American history, however, vaccination was a relatively common public health measure. George Washington, for example, made the then-unpopular decision to inoculate his forces against smallpox during the Revolutionary War with very positive results.

Likewise, fringe movements in the sciences have long been a part of American society, although, to be sure, there has been a decided uptick over the past few decades. Consider the Flat Earth theory. Despite what you may have heard, Christopher Columbus did not discover that Earth was round; this fact was known since antiquity. (Plato wrote about it.) The notion that Columbus, one of the few knowledgeable mariners who did not quite believe it was a sphere (he thought it was egg-shaped), “discovered” its shape was a creation of the writer Washington Irving, who sought to assign some scientific legitimacy to what was otherwise a colonialist land grab. [5] Yet in the past decade, Flat Earth theory has achieved a certain currency, fueled by YouTube videos and an appetite for contrarian thinking, hyperskepticism, and a yearning for community. [6]

The trend is new, but not so new. To sharpen the point: it is not a creation of “the Internet” (which went online in October 1969) or even more specifically “the Web” (online in 1993), which is what most people mean when they refer to our current deluge of information. There is no question that listservs, blogs, and social media — and, before them, electronic bulletin boards and Usenet — have made fringe movements more visible, whether scientific or not. It’s easier for individuals to transcend geographical constraints and communicate with like-minded peers. (Though a lot of the fringe-science community is not particularly like-minded, disagreeing, often volubly, about alternative theories of gravity or the ether.) Much fringe science is theoretical in nature and/or builds on published literature, often quite dated, so the barriers to entry are low. For centuries, scientists have reported debunking various “cranks” who have written to them about this or that new theory. [7] It may seem like there is more fringe thinking now because it is unfolding on your laptop screen, whereas previously it was easy to overlook the scribbled notebooks in your cousin’s neighbor’s basement that were thrown out when he passed away. Even so, I became interested in these alternative sciences in middle school in the 1980s, well before the Web. I was devouring whatever “science” I could get my hands on, and was especially keen on stuff my teachers thought was nonsense. I found plenty of leads in the public library.

The winter of discontent regarding science doesn’t necessarily mean that we are entering a new Dark Ages. (Not that we ever had a first Dark Ages, as medieval historians continually remind us, but that’s another matter.) [8] Though what is going on is a matter of concern, it is hard to say how dire it is. As loud as the “anti-science” or “just asking questions” crowd gets, public confidence in science remains high; the catch concerns what gets to count as “science.” Total numbers of radical skeptics probably aren’t that large, but then again there aren’t that many scientists either when you zoom out to a societal scale.

People still seem quite happy with their bypass operations, semiconductors, and aerodynamics. Those are among the (many) areas of science where nothing is amiss, so we are not talking about them. But in other areas, things are seemingly amiss, and we cannot seem to shut up. What has changed is not that these fringes exist, but that we are now paying attention to them. Why is that?

My friend’s advisor gave her sensible counsel. I know because I got the same advice. (I partially ignored it, which wasn’t always easy.) Then, sometime between 1990 and 2010 — approximately — something shifted in the perception of science, both in academia and among the public. The general tenor of how science was understood altered, almost like a photographic negative. That shift is important, because we cannot understand the anxieties of the present without seeing how they stemmed from the anxieties of the past.

¤

It used to be that historians of science looked for their origin stories in what is popularly known as “The Scientific Revolution” of the 16th and 17th centuries. The concept has undergone some pummeling of late, along the lines of Voltaire’s quip about the Holy Roman Empire: there was not just one single thing happening, it wasn’t at all clear that it was “scientific” in a straightforward sense, and “revolution” is probably the wrong way to talk about it. These days, it seems that to understand the science of the present, the go-to explanation is the Cold War. Not every phenomenon in contemporary science stems from the expansion of the power and prominence of science in the Cold War, but this one probably does.

These roots, in turn, extend back to interwar Europe, especially the residues of the Austro-Hungarian Empire. These were heady places to think about science. A small group of philosophers, many of them trained in physics and mathematics, gathered in a group now known as the “Vienna Circle,” espousing a philosophy of science that came to be called logical empiricism or logical positivism. They were enormously impressed with Albert Einstein’s theory of relativity both for its sharp logical critique of fundamental concepts (space, time, simultaneity) and its striking empirical confirmations. Science was reliable, they posited, because it represented a combination of thorough-going empiricism and rigorous logic. Fuzzy questions that strayed too far from either — for example, a lot of contemporary psychology — were simply not (yet) scientific notions. [9]

The key axiom undergirding this philosophy is that if we want to understand how rationality works and how to get better at it, we can do no better than study science. Science was our best example of rationality in action. True, it was fallible, given that it was practiced by humans, but it tended to correct its errors and make progress, unlike so many other domains of human activity. Crucially, the logical empiricists were interested in science when it was working the way it was supposed to. Sure, there were foibles, frauds, and fiascos, but following those detours would only distract from the real quarry: rationality.

Even critics of this group, such as Karl Popper and Imre Lakatos (the former from Vienna, the latter from Debrecen, Hungary), emerged from this milieu. When the most famous anti-positivist of all, Thomas Kuhn, wrote his landmark The Structure of Scientific Revolutions in Berkeley in the early 1960s, he dismantled many of the propositions the Vienna Circle took for granted. Science simply did not behave according to either naked empiricism (values were always creeping in) or logical analysis (people did not simply change their minds when confronted with disconfirmation). But nonetheless Kuhn was interested in how science operated normally.

For all the excitement among his contemporary readers about the notion of revolution — it was the ’60s, after all — Kuhn’s central concept of “paradigms,” now so ubiquitous, was important because it guided “normal science.” The term says it all: science, behaving as it was supposed to, was an activity centered around puzzle-solving. That’s how it made progress (measured with respect to the dominating paradigm). Kuhn wasn’t interested in the fringe. (His fellow Berkeleyite, Paul Feyerabend — originally from Vienna, as it happened — was, but he was a bit fringy himself.)

The intellectual milieu in the United States for the first few decades of the Cold War shared this fixation on science’s normality. The regnant sociologist of science, Robert K. Merton of Columbia University, formulated his theory of “norms” (note the language) in 1942: these were communism, universalism, disinterestedness, and organized skepticism, or “CUDOS.” The framework took off in the early Cold War, although the first term (referring to common ownership of ideas) was now a bit awkward. This picture of ordinary science as non-ideological was extensively promoted by American higher education, diplomacy, and propaganda. It served as a constructive branding of regular science (a.k.a. American science) as distinctively superior to the defeated National Socialist regime (eugenics) and the rising Stalinist one (Lysenkoism). Ideology meant bad science, fringy science. [10] Rational, democratic citizens should concentrate on science when it worked as advertised.

¤

It was a nice consensus while it lasted. Slowly but quite clearly, in the 1980s and especially the 1990s, an interest in the fringe began to emerge. Or maybe it is more precise to say that the emphasis on science as it is most of the time — usually, “normally” — began to attenuate. Even within the bastion of the history of science, scholars no longer shunted the fringes and “pathologies” of science to the side as a disparate set of (somewhat unsavory) topics, but rather began to understand them as occasional and even common outgrowths of the process of producing knowledge.

Part of the reason historians of science (and scholars in associated fields) had concentrated so hard on science as it functioned normally was because science was supposed to be the best instantiation we had of a rational human activity. That vision was justified by a geopolitical environment that granted tremendous status to scientific research, even in basic sciences that seemed to have no military applications. Both the United States and the Soviet Union poured vast amounts of treasure into supporting science, and their scientific communities ballooned as a consequence. The result: Superb research. Starting in the mid-1980s, the dynamic shifted.

History of science was still a small field, but certain people noticed the change in emphasis. In the early 1990s, a small group of scientists and mathematicians launched a series of broadsides against the “relativists” in history and sociology of science who were, they believed, undermining science’s position in the public sphere, rendering it vulnerable to political or cultural attack. The most famous such episode was the 1996 publication by NYU physicist Alan Sokal of an article in the journal Social Text entitled “Transgressing the Boundaries: Toward a Transformative Hermeneutics of Quantum Gravity,” which proclaimed in jargonish prose the social construction of its object of study. Three weeks later, he told Lingua Franca — gossip zine to the academy — that it was a hoax designed to expose the bankruptcy of what passed for thought in this field. There was, shall we say, an uproar. [11] Sokal had predecessors. In 1994, biologist Paul R. Gross and mathematician Norman Levitt published Higher Superstition: The Academic Left and Its Quarrels with Science (Johns Hopkins University Press), which had quite a lot of derision to pass around. My concern right now is not who was right, who was wrong, or who was more cutting in their bon mots. My interest is in the timing.

A year before Higher Superstition, Congress had cut the funding for the Superconducting Super Collider (SSC) in Waxahachie, Texas, which already ran more than $4 billion over its $4.4 billion budget. [12] This was the great hope of the American, indeed the international, physics community: a machine that would finally discover the Higgs boson and fully confirm the boringly named Standard Model of particle physics. (The Higgs was eventually found by the Large Hadron Collider in Geneva in 2012.) A lot of finger pointing ensued. Maybe the politicians hadn’t sold it well enough. Maybe the biologists were to blame, as their Human Genome Project seized all the headlines. Maybe it was a sign that the Cold War had come to an end and physicists could no longer count on an infinite bar tab. I’m pretty sure it wasn’t canceled because of debates among historians of science.

That point about the end of the Cold War is important. The pressure that kept the idealized image of science in place on both sides of the Iron Curtain relaxed. In the final years of the Soviet Union, for example, astrology and charismatic faith-healing exploded in popularity, breaching prime-time television and the major publishers. [13] Of course science was still being funded in the United States — and, until the onset of the economic crisis of the early 1990s, also in the Soviet Union — but a growing proportion now flowed into practical fields like genetic engineering from the private sector, which paid attention to budget constraints. Science had not held its position as the exemplar of rationality by force of argument alone. Significant effort and resources were expended to keep it there: to preserve attention on how amazing it is (and it is amazing) that ordinary science can reveal extraordinary truths. When that force relaxed, the public image of science began to drift.

With all the concern about patents and IPOs, scientists no longer seemed quite as disinterested as they had before; to some, they appeared like a special interest, and special interests invited cynicism. The forms of science, its avenues of publication and mechanisms of argumentation, were by this time well established, and did not undergo significant change (even as publication itself moved online, and peer review lost much of its bite). Cynicism is a corrosive thing, and it will erode any idol, no matter how glistening. The techniques of “denialism” chronicled in Merchants of Doubt — the reliance on think tanks and calls for “more research” in order to expand uncertainty and minimize regulatory action, all through the ostensibly neutral and objective forms of professionalized science — dated back to the tobacco industry’s “Frank Statement to Cigarette Smokers” in 1954. They were not deployed by the fossil fuel industry with unrestrained force, nor were they fully unfurled in partisan politics, until the 1990s. [14] Things took off from there.

¤

This essay is not a nostalgic lament for times of yore, when the logical positivist vision of science was dominant and historians of science (and the general public) focused on scientific triumphs and achievements and ignored the messy byways of the fringe. A lot of good history was written in that period, to be sure, just like a lot of good science is being done now. My contention is that there is something skewed about focusing only on one aspect of science and not the others. When something is tilted like that, it’s the historian’s impulse to ask how it got that way. There exists no neutral moment when the public and scholarly discourse got science “right” — whether now or during the heights of the Cold War. In both periods, people were producing a foreshortened image.

The Cold War–era philosophical conception of science, derived from the Viennese logical empiricists, functioned much like a clean room for the public understanding of science. Because the quarry was rationality in its most pristine state, we were supposed to investigate science under ideal, even romanticized conditions. Then as now, of course, scientists everywhere were dealing with a lot of genuine confusion and mess, which is part of making knowledge in any context. We have now left the clean room, and all of that messiness is open for public comment. For all the chastisement and invective chronicled at the start of this essay, this is actually an improvement in terms of obtaining a historically accurate, richly evidenced understanding of the past and present of science. We can only make clear decisions about science when we understand it as it really is, warts and all.

The difficulty, of course, comes if we approach this complicated, chaotic version of science still wearing Viennese goggles. It is highly misleading and even dangerous to pretend that we remain in the cultural clean room of the McCarthy era. If you think that science is of value because it is our best exemplar of rationality, and simultaneously you are barraged with the fraud allegations, fringe science movements, and denialism, then of course you would conclude that we are doomed. Science, our knight in shining armor, has some rust around the hinges — but it always did. The aspects of science that were impressive in the past are still there, but now they are seen in context. You should pay attention to all of it, and nobody need think you are fringy for doing so.

¤

Michael D. Gordin is a professor in Princeton’s history department. His latest book is On the Fringe: Where Science Meets Pseudoscience.

¤

Featured image: "COVID-19 No More Lockdowns Protest in Vancouver, May 17th 2020" by GoToVan is licensed under CC BY 2.0. Image has been cropped.

¤

[1] Reproducibility Project: Psychology; Susan Dominus, “When the Revolution Came for Amy Cuddy,” New York Times Magazine (October 18, 2017); Monya Baker, “Biotech Giant Publishes Failures to Confirm High-Profile Science,” Nature 530 (2016), 141.

[2] Nicolas Chevassus-au-Louis, Fraud in the Lab: The High Stakes of Scientific Research, tr. Nicholas Elliott (Cambridge, Massachusetts: Harvard University Press, 2019); Eugenie Samuel Reich, Plastic Fantastic: How the Biggest Fraud in Physics Shook the Scientific World (New York: Palgrave Macmillan, 2009); David Goodstein, On Fact and Fraud: Cautionary Tales from the Front Lines of Science (Princeton: Princeton University Press, 2010); Charles Gross, “Disgrace: On Marc Hauser,” The Nation (January 2012): 25–32.

[3] Mario Biagioli, “Fraud by Numbers: Metrics and the New Academic Misconduct,” Los Angeles Review of Books (September 7, 2020); Xavier Bosch, “Exorcising Ghostwriting,” EMBO Reports 12, no. 6 (June 2011): 489–494.

[4] Mark A. Largent, Vaccine: The Debate in Modern America (Baltimore: Johns Hopkins University Press, 2012). COVID-19 has brought some of these loopholes into question.

[5] Jeffrey Burton Russell, Inventing the Flat Earth: Columbus and Modern Historians (New York: Praeger, 1991).

[6] The movement is sensitively depicted in Behind the Curve, dir. Daniel J. Clark (2018).

[7] A distinguished example is the mathematician Augustus De Morgan’s A Budget of Paradoxes (London: Longmans, Green, 1872).

[8] Two recent books speak directly to this point: Seb Falk, The Light Ages: The Surprising Story of Medieval Science (New York: Norton, 2020); and Matthew Gabriele and David Perry, The Bright Ages: A New History of Medieval Europe (New York: HarperCollins, 2021).

[9] For a recent account, see David Edmonds, The Murder of Professor Schlick: The Rise and Fall of the Vienna Circle (Princeton: Princeton University Press, 2020).

[10] Audra J. Wolfe, Freedom’s Laboratory: The Cold War Struggle for the Soul of Science (Baltimore: Johns Hopkins University Press, 2018); George A. Reisch, How the Cold War Transformed the Philosophy of Science: To the Icy Slopes of Logic (Cambridge: Cambridge University Press, 2005).

[11] “The Sokal Hoax: A Forum,” Lingua Franca (July/August 1996).

[12] Michael Riordan, Lillian Hoddeson, and Adrienne W. Kolb, Tunnel Visions: The Rise and Fall of the Superconducting Super Collider.

[13] See, for example, Joseph Kellner, “As Above, So Below: Astrology and the Fate of Soviet Socialism,” Kritika: Explorations in Russian and Eurasian History 20, no. 4 (fall 2019): 783–812.

[14] Chris Mooney, The Republican War on Science (New York: Basic Books, 2005).

LARB Contributor

Michael D. Gordin is a professor of history at Princeton. He has done research on the early development of the natural sciences in Russia in the 18th century, biological warfare in the Soviet Union, the relationship of Russian literature to the natural sciences, Lysenkoism, Immanuel Velikovsky, pseudosciences, the early history of the atomic bombs and the Cold War, Albert Einstein in Prague, the history of global scientific languages, the life of Dmitri Mendeleev, and the history of the periodic table.
