The Future, Revisited: “The Mother of All Demos” at 50

How the ’60s counterculture gave birth to personal computers and the vast tech industry that builds and sells them.

By Andy Horwitz, December 8, 2018

Part 1: The Future Revealed

A MILD-MANNERED ENGINEER stands onstage at San Francisco’s Civic Auditorium. A massive video screen looms behind him, displaying a close-up of his face in the lower right half of the screen, with a close-up of his computer display superimposed over his face to the left. Introducing his team, he sounds a bit nervous, saying, “If every one of us does our job well, it’ll all go very interesting, I think.”

He starts by typing text, and then copying and pasting the word “word” multiple times, first a few lines, then paragraphs. He cuts and pastes blocks of text. He makes a shopping list his wife has requested — bananas, soup, paper towels — creating numbered lists, categories and subcategories, using his cursor to move around the document. Narrating as he works, he sounds not unlike Rod Serling. When he makes the occasional self-deprecating joke, we hear genial laughter from the audience.

Today, this presentation would be completely unremarkable. But it’s not 2018 — it’s December 9, 1968. The engineer is Douglas C. Engelbart, founder of the Augmented Human Intellect Research Center at the Stanford Research Institute, and nobody in his audience — or in the history of the world — has ever seen anything like it before.

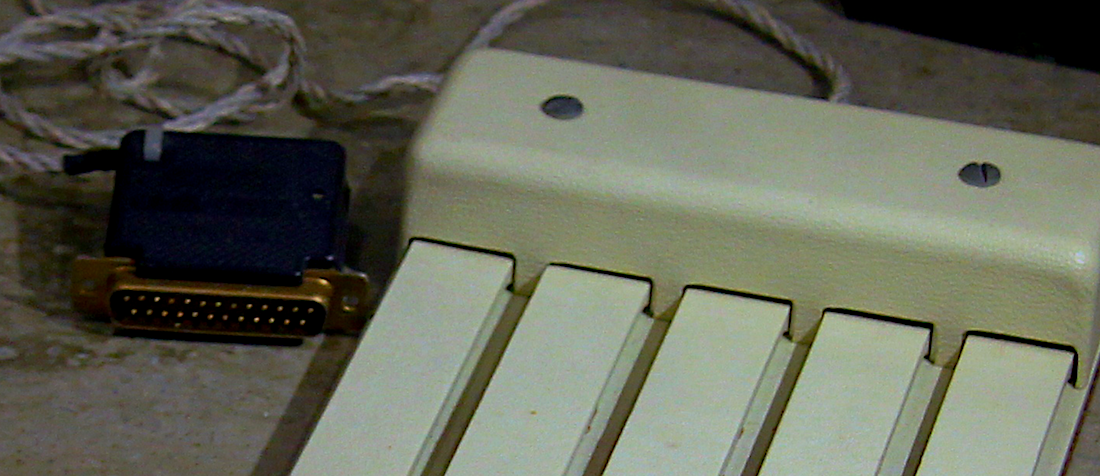

Over the course of the next 90 minutes, seated at a workstation custom-designed by the research division of Herman Miller and supported by a team of programmers, engineers, and audiovisual technicians, Engelbart introduces the world to the oN-Line System (or NLS) his team has built. Using a keyboard and the newly invented mouse, he demonstrates all the things it can do, things we today take for granted as the necessary tools of everyday work and play: word processing, hypertext and linking, windows, view control, collaborative working, revision tracking — basically, the entire world-to-come of networked personal computing.

As I sit in my living room, typing this essay on my laptop, it is difficult to conceive just how radical a proposal this was. According to tech writer John Markoff, in his 2005 book What the Dormouse Said: How the Sixties Counterculture Shaped the Personal Computer Industry, for the people gathered in the room that day, “[t]he relationship between man and computer had been turned upside down.”

So how is it that so few people outside of the tech sector have heard of Douglas Engelbart, except possibly — and reductively — as the inventor of the mouse? How could the man at the center of what has been retroactively dubbed “The Mother of All Demos” have fallen into such obscurity?

One part of the answer may well be Engelbart’s personality. By his own admission he could be single-minded, stubborn, and intense. He was a visionary, intent on designing human-machine systems to help people navigate complexity, who was himself often incapable of explaining his vision simply and clearly to others. He was notoriously uncompromising and prickly in pursuit of his vision, an advocate for collaboration who was not a good collaborator, known for ending discussions with, “You just don’t get it.”

Personality aside, part of the answer to his paradoxical obscurity may be the very success of his presentation itself. The “Mother of All Demos” was produced in part by Stewart Brand, publisher of The Whole Earth Catalog, producer of The Trips Festival (an outgrowth of Ken Kesey’s Acid Tests), and later co-founder of the WELL (Whole Earth ’Lectronic Link), one of the first online communities. In his 2014 book The Innovators: How a Group of Hackers, Geniuses, and Geeks Created the Digital Revolution, Walter Isaacson writes:

Thanks to Brand’s instincts as an impresario, the demo […] became a multimedia extravaganza, like an Electric Kool-Aid Acid Test on silicon […] unchallenged, even by Apple product launches, as the most dazzling and influential technology demonstration of the digital age.

This was a new type of presentation, produced by a new type of impresario at a moment of profound cultural change. Given the context — San Francisco in 1968 — one might consider “The Mother of All Demos” as akin to rock impresario Bill Graham’s contemporaneous concerts at the Fillmore Auditorium featuring Muddy Waters or Howlin’ Wolf. If Engelbart represents the mild-mannered but obstinate and complex computer engineer from the black-and-white world of postwar America, Stewart Brand represents the world that was coming into being: the avatar of a plastic fantastic future, a colorful, freewheeling, anti-authoritarian and anti-establishment iconoclast, an entrepreneurial showman to the core.

Brand’s “Electric Kool-Aid Acid Test on silicon” helped Engelbart demonstrate his vision, complex as it was. As a presentation, the “Mother of All Demos” is the opposite of complicated and difficult. It is easy to follow and mostly accessible, even to the layperson. And this is the direction the world was headed. Today, when people recall the “Mother of All Demos,” they remember it as a media event, a forerunner of flashy product launches and TED Talks, an intimation of the slick, media-saturated, hyper-marketed world to come — a world that doesn’t easily accommodate a man like Douglas Engelbart.

Born in Portland, Oregon, in 1925 and raised during the Depression, Engelbart was drafted into the Navy near the end of World War II, continuing to serve after the war was won. In 1945, stationed in the Philippines as a radar operator, he pored over whatever reading material he could get. It was here that he first encountered Vannevar Bush’s seminal essay from The Atlantic, “As We May Think.” Bush poses the question that would come to define postwar governmental research: after being so involved in the war effort, “what are scientists going to do next?” He writes, “Now, as peace approaches, one asks where they will find objectives worthy of their best?”

Bush’s answer to this question is, in part, computers. He anticipates the growing challenges of knowledge workers in a technologically advanced society and the need for new machines to support them, to free them from intellectual drudgery to pursue ever more sophisticated, higher-order thinking. He vividly imagines what that machine might look like:

Consider a future device for individual use, which is a sort of mechanized private file and library. It needs a name, and, to coin one at random, “memex” will do. A memex is a device in which an individual stores all his books, records, and communications, and which is mechanized so that it may be consulted with exceeding speed and flexibility. It is an enlarged intimate supplement to his memory.

One can imagine Engelbart musing upon Bush’s “memex” when he returns to civilian life and completes his education, including an MS and PhD at Berkeley. Bush’s “memex” is there in 1951 when Engelbart has a kind of existential crisis, wrestling with what his life’s work should be, and realizes that, “[i]f in some way, you could contribute significantly to the way humans could handle complexity and urgency, that would be universally helpful.” Since he had lived through the great social cataclysms of the Depression and World War II, it makes sense that Engelbart would take up the mantle of science in pursuit of the public good and a better world.

And so, when Engelbart takes the stage in 1968, he is sharing his vision “to boost collective intelligence […] to collectively solve urgent global problems,” a vision of the future of computing that is deeply collaborative and dedicated to improving people’s lives. But it is only the narrowest part of his vision that actually catches on: a personal computer, made for individuals — and we are still living with the ramifications of this diversion.

Which raises the question: what happened?

It helps to look more deeply at the context in which Engelbart first presented his vision. The “Mother of All Demos” was jointly sponsored by the Advanced Research Projects Agency (ARPA), the National Aeronautics and Space Administration (NASA), and the Rome Air Development Center (Air Force). But as the ’60s drew to a close, government funding for Engelbart’s research began to dwindle. In 1970, Xerox opened its famed research center, PARC, in Palo Alto, signaling a shift toward private sector funding. Bob Taylor, formerly of NASA and ARPA and an early supporter of Engelbart’s, ended up managing the Computer Science Laboratory at Xerox PARC, where a number of Engelbart’s ideas continued to be explored — and realized — within the private sector. It was on a 1979 visit to Xerox PARC that Steve Jobs saw the graphical user interface (GUI) and the mouse in action, ideas that would go on to shape Apple’s Macintosh and, in its wake, Microsoft’s Windows.

All technology is a product of its time; while specific material and conceptual elements of Engelbart’s work carried over — the mouse and keyboard, windowing, text editing — his wider vision of computers to support networked collaboration for tackling big, complex problems didn’t resonate with younger colleagues. This was the Bay Area in the aftermath of the ’60s, and mainstream society was seen as rigid and repressive, silencing free speech, stifling creative expression, and compelling conformity. Distrust of the government and of large corporations — the military-industrial complex — was widespread. The ’60s valorization of radical self-expression and personal freedom — in the form of social, sexual, and chemical experimentation — had enshrined the ideal individual as iconoclastic, anti-establishment, anti-authoritarian, and free. And it was this pivotal cultural moment that gave birth to the personal computer.

This new technology came to represent autonomy from the technocratic Establishment, from large mainframe computers located in a lab. A personal computer was yours — you didn’t have to get anyone else’s permission to use it. Whether you were a computer scientist at Xerox PARC or a hacker “hobbyist” in Menlo Park, it was believed that the PC revolution was going to transform society, education, creativity, and the world as we knew it, making each person, on his own terms, self-reliant and free.

In this shift, we see that the “Mother of All Demos” was at once the apex of Engelbart’s career and the beginning of the end. He may have been onstage, but Stewart Brand was waiting in the wings. The American society that valued government-supported research for the public good was being replaced by the anti-government ideas and attitudes of the counterculture.

Part 2: Think Different

It’s January 22, 1984. On televisions across America, an athletic young woman with a toned physique, white-blonde hair, and a look of fierce determination runs through a drab, gray, dystopian industrial landscape, pursued by the Thought Police. In red shorts and a white T-shirt emblazoned with the picture of a Macintosh, she races past lines of defeated, listless proles, some marching in unison, others seated in front of a massive screen featuring in close-up an ominous-looking Big Brother gravely intoning:

We have created, for the first time in all history, a garden of pure ideology — where each worker may bloom, secure from the pests purveying contradictory truths. Our Unification of Thoughts is more powerful a weapon than any fleet or army on earth.

Whipping the sledgehammer around her head like an Olympian winding up for the hammer throw, she launches it at the screen, which smashes to bits in an explosion of blinding light and smoke. The explosion generates a wind that blows through the hypnotized masses and, one infers, awakens them, setting off the revolution. As the dust settles, text scrolls past while a man’s calm, cultured, but persuasive voice informs us: “On January 24th, Apple Computer will introduce the Macintosh. And you’ll see why 1984 won’t be like ‘1984.’”

Only three years earlier, almost to the day, Ronald Reagan famously announced, in his 1981 inaugural address: “In this present crisis, government is not the solution to our problem; government is the problem.” In 1983, the term “Yuppie,” a snarky acronym for “Young Urban Professional,” entered common parlance. While “Yuppie” would become a catchall for anyone image-obsessed and aspirational, it was particularly aimed at the hippies who had “dropped out” in the 1960s and ’70s, only to drop back in to cash in. This type was personified by Jerry Rubin, former radical leader of the Yippies (the Youth International Party), who, in 1980, went to work on Wall Street as a stockbroker. The term “Me Generation” may be overly tidy journalistic shorthand, but it is useful for describing the experiences of the cohort that was born into the unprecedented affluence of the postwar period, which provided the safety and comfort both to question prevailing social assumptions and to pursue other values, such as individual creativity and self-realization.

So, while Apple’s “1984” ad is, on its surface, a jibe at IBM’s market dominance, it is also, more significantly, a repudiation of the conformist American society that arose in the immediate aftermath of World War II. The new world, Apple tells us, will be about empowering the individual.

With its “1984” ad, Apple bottled the countercultural veneer of the collaborative “hacker” community and turned it into a product. The Macintosh performed a remarkable feat of triangulation, whereby an individual could realize his or her potential through self-expression using cutting-edge technology while also rebelling against authority — all by purchasing a luxury good. Fashionable consumerism as individual rebellion. By a kind of alchemical magic, Apple’s ad neatly and unobtrusively aligns countercultural ideas of individual liberty and anti-establishmentarianism with Reagan-era conservative notions of “individual responsibility” and “small government.” The culture wars notwithstanding, it doesn’t matter which side you think you are on: in the future everyone needs a computer.

The launch of the Macintosh captured a zeitgeist and established a value set for the tech sector, one that has been adopted widely throughout American society: “think different,” be iconoclastic, pursue your individual vision in spite of all obstacles, disrupt, innovate, dominate. Ironically, the ad’s rejection of the previous generation’s “groupthink” was, in effect, a rejection of Big Government — including the very federal programs that invested in tech research in the 1940s, ’50s, and ’60s, and sent a generation of people to college on the GI Bill, including Doug Engelbart. That world, Apple now declared, was obsolete.

Part 3: From Cyberia to Snapchat

While the PC business grew into a full-blown industry during the 1980s and early ’90s, a small but vibrant subculture of hackers persisted at the intersection of computers and psychedelic drugs. These mutant rebel children of Timothy Leary and William Gibson dreamed of living an impossible future in the present. Smart drugs, raves, zines, Survival Research Laboratories, Burning Man, and early iterations of virtual reality populated countercultural visions of a utopian anarchist society of cyberpunks and urban primitives living off the grid in self-sufficient solar-powered yurts — all connected by the internet.

I had fallen in love with the internet before it even existed, through reading science fiction. I couldn’t wait for the future to get here, so I could be a part of this extraordinary new world. I first visited the internet in 1994, using my friend’s computer and University of Washington account. I bought my first personal computer in 1996, a Macintosh Performa, and got online using AOL. I built my first personal website on earthlink.net not long after.

Few of us early netizens had any idea what was to come, of how quickly and completely the seemingly endless possibilities of the internet would be co-opted, consolidated, and ultimately dashed.

Anyone who lived through the late ’90s in Silicon Valley or Silicon Alley can tell you that it was a crazy time. Old businesses didn’t understand how the internet worked, but super-smart young guys who looked cool and “alternative” were pitching them ideas that filled their heads with dollar signs. Some of the Old Guard was openly disdainful. When Razorfish’s Jeff Dachis and Craig Kanarick appeared on 60 Minutes II at the height of the boom, interviewer Bob Simon responded dismissively to Dachis’s assertion that his company “asked our clients to recontextualize their business” by saying: “You know, there are people out there, such as myself, who have trouble with the word recontextualize.” He pressed on, “Tell me what you do. In English.” The “Dot Com kids,” as Dachis and Kanarick were described on the program, thought the segment made the Old Guys look out of touch. The Old Guys thought it made the Dot Com kids look like cocky idiots. In a way, they were both right.

Soon, every start-up had a roomful of twentysomethings with a crazy-genius idea and a huge pile of money, along with one resident Old Guy whose main job was to make sure they didn’t blow all that money on drugs and parties and foosball before building their crazy-genius idea. Some ideas were stupid: remember Kozmo.com, the delivery service that would bring anything you wanted to your door — even just a Snickers bar — by bike messenger, for free? Others were just ahead of their time, like Pseudo.com, the online broadcaster that launched in 1993 but closed shop in 2001 due to lack of funds.

In his 2002 memoir about working at Amazon.com in the 1990s, 21 Dog Years: A Cube Dweller’s Tale, Mike Daisey recalls:

[T]here was the Old World and the New World, and a war was coming in which Amazon would play a vital role, vanquishing bad, brick-and-mortar corporations. We began to believe that by supporting Amazon.com we would be helping to crush chains and monopolies and faceless bureaucracies.

Here again we see the young future monoliths positioning themselves as rebels, punks, and revolutionaries. Daisey’s perhaps too kind assessment is that “we were hopelessly naïve.”

Even amid this irrational exuberance, even as the language of the counterculture was co-opted for corporate gain, a small subculture persisted with genuinely communitarian dreams of a non-commercial internet that would actually bring people together: bloggers. Justin Hall, recognized as one of the world’s first bloggers, wrote in an early post: “When we tell stories on the Internet, we claim computers for communication and community over crass commercialism. By publishing ourselves on the web, we reject the role of passive media marketing recipient.”

My first “official” blog post was in August 2000, when I was part of a small community of bloggers in New York City who started to socialize regularly (in real life). When we first met in bars or cafes, we would often introduce ourselves by our URLs. When I started blogging, I felt no restraint. The World Wide Web didn’t seem so wide, didn’t seem to encompass so much of the world; it did not feel crowded. Rather, it felt like a private place unlike any other, a place where I no longer needed to perform some version of myself. I could write truthfully, brutally, and sometimes beautifully about my life, exposing my innermost self to only the handful of people with whom I had shared my URL. Even after blogging became a “thing,” it still felt authentic; I still felt that I could be genuinely myself online.

In the late 1990s, by virtue of having taught myself HTML, I got hired as an interactive producer and brand strategist at a global ad agency. On September 11, 2001, I went to work as usual at the Woolworth Building, two blocks from the World Trade Center. It was a beautiful day, warm, with a clear blue sky. I got to work around 8:30 a.m., sat down at my desk, and turned on my computer. Then I heard a loud bang. I managed to write one blog post before we evacuated the building, earning the dubious distinction of being the first person on the planet to blog 9/11.

As far as I know, this was the first time that a global event of this scale was reported on, in real time, by eyewitnesses, self-publishing on the internet. I proceeded to blog for the next week. I got supportive, compassionate emails and comments on my posts from all over the world. From that experience I came to believe, if only for a little while, in the power of the internet to connect people in meaningful ways, to help us find each other in times of need.

Unfortunately, the tech bubble had already burst, and in the economic downturn that followed, the Old Guys weren’t as willing to throw their money at cocky kids anymore. The tech sector was relatively slow to rebound.

Into this vacuum stepped Nick Denton, who started hiring New York City bloggers to shape the editorial voice and direction of Gawker.com, launched in December 2002. A few months later, Google acquired Ev Williams’s foundering company Pyra Labs, home to Blogger. Suddenly, blogging was a mainstream phenomenon, with Blogger now part of Google’s ever-expanding suite of services and competing with a slew of other platforms, such as LiveJournal. The launch of Gawker and its related properties legitimized blogging as a force in the media sector. Old Media publishers were suddenly scouring blogs for new voices to whom they could offer book deals or were launching blogs for their existing brands, often developing proprietary content management systems. Platforms like Tumblr evolved, and Squarespace made it easier and easier for anyone to build a personal website.

And blogging was just the tip of the iceberg. The first social network I joined was Friendster in 2002. In retrospect, the platform launched too early and nobody quite knew what to do with it. Before people could figure it out, MySpace launched in 2003 and basically ate Friendster for lunch, becoming the biggest social network in existence from 2005 to 2009 (coinciding with its purchase by Rupert Murdoch’s News Corporation). In September 2006, Facebook opened its doors to anyone over 13 with an email address, and the rest is history. Twitter, Instagram, Snapchat, and the rise of mobile technology transformed social networks into social media, creating an entirely new mediaverse populated and powered by user-generated content, all owned by a very few massive corporations presided over by a small number of billionaires.

But what about the technology that made this all possible? The cyberpunks of the ’80s and early ’90s would probably have been surprised to learn that the incipient internet of their dreams was made possible, in no small part, by a decidedly moderate junior senator from Tennessee. Al Gore Jr. was one of the so-called “Atari Democrats,” a group of congressmen united by their belief that investment in the tech sector would be their generation’s New Deal. While this vision was markedly different from the traditional Democratic commitment to social programs, in that it was rooted in the neoliberal belief in free markets, Gore and his colleagues nonetheless saw that the government had a significant role to play in fostering technological innovation.

Inspired by the role his father, Senator Al Gore Sr., had played in passing legislation to build the United States’s interstate highway system in the 1950s, Junior set out to build the “information superhighway.” He did this by crafting and helping to pass the Gore Bill, otherwise known as the High Performance Computing Act of 1991, which allocated $600 million for high-performance computing and the National Research and Education Network, and which laid the groundwork for the National Information Infrastructure (or NII) that Gore would champion as vice president.

This legislation led to technological advances that made the internet as we know it possible, including the creation of the Mosaic browser. Released on January 23, 1993, Mosaic opened the internet and the World Wide Web to the general public for the first time. Since 2004, such access has supposedly become “democratized.” But ownership of the underlying architecture, the platforms that serve as the internet’s “public spaces,” and the digital tools that serve as the means of production and communication, is now entirely privatized. This is a far cry from ARPANET, the pioneering computer network launched in 1969, whose first link connected the computer lab at UCLA with Doug Engelbart’s Augmentation Research Center at SRI. It is also hard to see how this arrangement furthers the public good that projects such as Gore’s and Engelbart’s were purportedly meant to serve.

Today, everyone is a “content creator” or an “entrepreneur”; everyone is meant to “think different,” to be iconoclastic, to pursue their individual vision in spite of all obstacles, to disrupt, innovate, and dominate. But we do all this as consumers in a marketplace, not as citizens in a republic. The social contract has been broken, the social safety net dismantled, and social media is anything but social — it is, by design, the exact opposite.

When I look at what the connected world has become, I can’t help but feel that Mike Daisey was right: “We were hopelessly naïve.” And the more I learn about Douglas Engelbart, the earliest days of the personal computer, and the internet, the more I believe that the technology that currently shapes our experience of the world is designed based on unquestioned assumptions and ideologies. By questioning them now, perhaps we can change the future.

Part 4: The Future, Revisited

The easy story to tell about the “Mother of All Demos” is how cool it was, how Brand’s influence made it a true multimedia spectacle, how Engelbart was a genius ahead of his time. All of those things are true. But the harder story is that of the path not taken.

The underlying design premise of the personal computer, in fact of all the tech that permeates our lives, is an experience in which the “user” is imagined as an individual, one who expects a “personalized” experience. Simplicity is assumed to be a virtue, the interface is supposedly easy to use, and complexity is hidden. Every website, every product interface, every algorithm, is designed to work for individuals, in isolation. From customized playlists to “friend suggestions” to Amazon recommendations to the design of your phone — all are conceived within the isolating framework of privatized experience. Even so-called “social” media is geared to “share” what individuals have created, not to co-create together. At all points, individual experience is privileged over collaboration.

In her 2015 book Reclaiming Conversation: The Power of Talk in a Digital Age, sociologist Sherry Turkle calls attention to the ideal of “friction-free” design, presumed to be the salient attribute of an optimal “user experience.” Everything should be easy to understand and easy to use and should present no uncomfortable obstacles. The ubiquitous tech mandate to create “friction-free” experiences diffuses into everyday life, driving people to avoid the slow, messy, and difficult processes of interpersonal communication, thereby exacerbating our sense of social isolation.

Engelbart’s stated goal was to “boost collective intelligence and enable knowledge workers to think in powerful new ways, to collectively solve urgent global problems.” But rather than enabling people to navigate complexity, much of today’s technology seems aimed at trying to remove complexity from the world entirely.

The cost of our desire for “friction-free” experiences is palpably evident in our approach to public life and the messy business of democracy. As the project of dismantling government that began in earnest in 1980 continues apace, it is worth remembering that it was the materially affluent and stable society of postwar America that made the tech sector as we know it today possible. It is ironic that a sector built on a distrust of centralized power — either corporate or governmental — continues to consolidate power and resources. It is equally ironic that a sector that in so many ways owes its very existence to government support and a prosperous postwar society has played such a pivotal role in the disintegration of that society.

In 1996, John Perry Barlow wrote “A Declaration of the Independence of Cyberspace.” Barlow, who called himself a “cyberlibertarian” activist, was a founding member of the Electronic Frontier Foundation and sometime lyricist for the Grateful Dead, and was thus positioned at the juncture of tech and the counterculture. His manifesto begins: “Governments of the Industrial World, you weary giants of flesh and steel, I come from Cyberspace, the new home of Mind. On behalf of the future, I ask you of the past to leave us alone. You are not welcome among us. You have no sovereignty where we gather.”

Barlow conveniently overlooks the fact that cyberspace, as it currently exists, is not a public good but rather a commodity. He continues:

We have no elected government, nor are we likely to have one, so I address you with no greater authority than that with which liberty itself always speaks. […] Governments derive their just powers from the consent of the governed. You have neither solicited nor received ours.

Even as it alludes to the words and ideas of the United States’s founding documents, Barlow’s declaration elides one essential and profound difference: the signers of the Declaration of Independence of the United States were proposing to replace a monarchy with a democratic government. They were building a country, not a consortium of privately held businesses.

In light of Barlow’s declaration, we can see merit in the argument that Facebook is a kind of country. In fact, it has been said that, if Facebook were a country, it would be the largest in the world. I doubt that when Mark Zuckerberg first built Facebook he intended to build the country of the future; yet what is a country, after all, but a vast, complex, interdependent social network? To put it another way: what if we consider Facebook from the perspective of governance, rather than commerce? At the moment, it is a failed state. What would it look like as a democracy? What do we do in our present crisis, when, to paraphrase Ronald Reagan, tech is not the solution to the problem, tech is the problem?

Watching the “Mother of All Demos” in 2018 provides a welcome opportunity not only to recognize the accomplishments of Douglas Engelbart but also to revisit his ideas about collaboration and, more saliently, about human beings and technology, since these ideas may help us navigate the precarious moment in which we find ourselves. At the very heart of Engelbart’s vision was a recognition of the fact that it is ultimately humans who have to evolve, who have to change, not technology.

In a 1995 interview for JCN Profiles entitled “Visionary Leaders of the Information Age,” Engelbart argues that, while tech (which he calls “the tool system”) has advanced beyond anyone’s wildest dreams, “the human system” continues to lag. He explains:

The scale of change [on the tool side was] going to increase by huge factors in the coming decades. It became apparent to me then that this human system side was in for massive, massive changes. […] Are we ready for viewing the scale of change on this [human] side that it will take in order to really, really harness what’s over here [tool side]? […] We better start learning and being explicit about this change in the human system rather than just letting the product people throw new stuff at us.

What if we really listened to him and looked much harder at the human system as a candidate for serious change?

Alan Kay, one of the pioneering computer scientists at Xerox PARC, once said, “I don’t know what Silicon Valley will do when it runs out of Doug’s ideas.” In 2006, when Engelbart was asked how much of his vision had been achieved, he replied: “About 2.8 percent.” That gives us a lot to work with.

Fifty years after the “Mother of All Demos,” Doug Engelbart’s vision is as relevant as it ever was. We are once again standing on the precipice of a new world, one that seems dangerously unstable and dark. What if, this time, we tried to do things differently?

¤

Andrew Horwitz is a writer living in Los Angeles.

¤

Feature image by New Media Consortium.

LARB Contributor

Andrew Horwitz is a writer living in Los Angeles. He founded the arts and culture website Culturebot.org in 2003, serving as editor until 2014. In 2014, he received a Creative Capital | Warhol Foundation Arts Writers Grant for his project Ephemeral Objects: Art Criticism for the Post-Material World. His writing has been published in The Atlantic, The Guardian, Art Practical, Theatre Forum, The Dancehouse Diary, Americans for the Arts’ ARTSBlog, The Live Art Almanac Volume 3, and Cultural Weekly, among other outlets. For more information visit his website at www.andyhorwitz.com.
