Inspiration from the Luddites: On Brian Merchant’s “Blood in the Machine”

Three educators find inspiration for fighting automation in the classroom in Brian Merchant’s “Blood in the Machine: The Origins of the Rebellion Against Big Tech.”

By Antero Garcia, Charles Logan, T. Philip Nichols

January 28, 2024

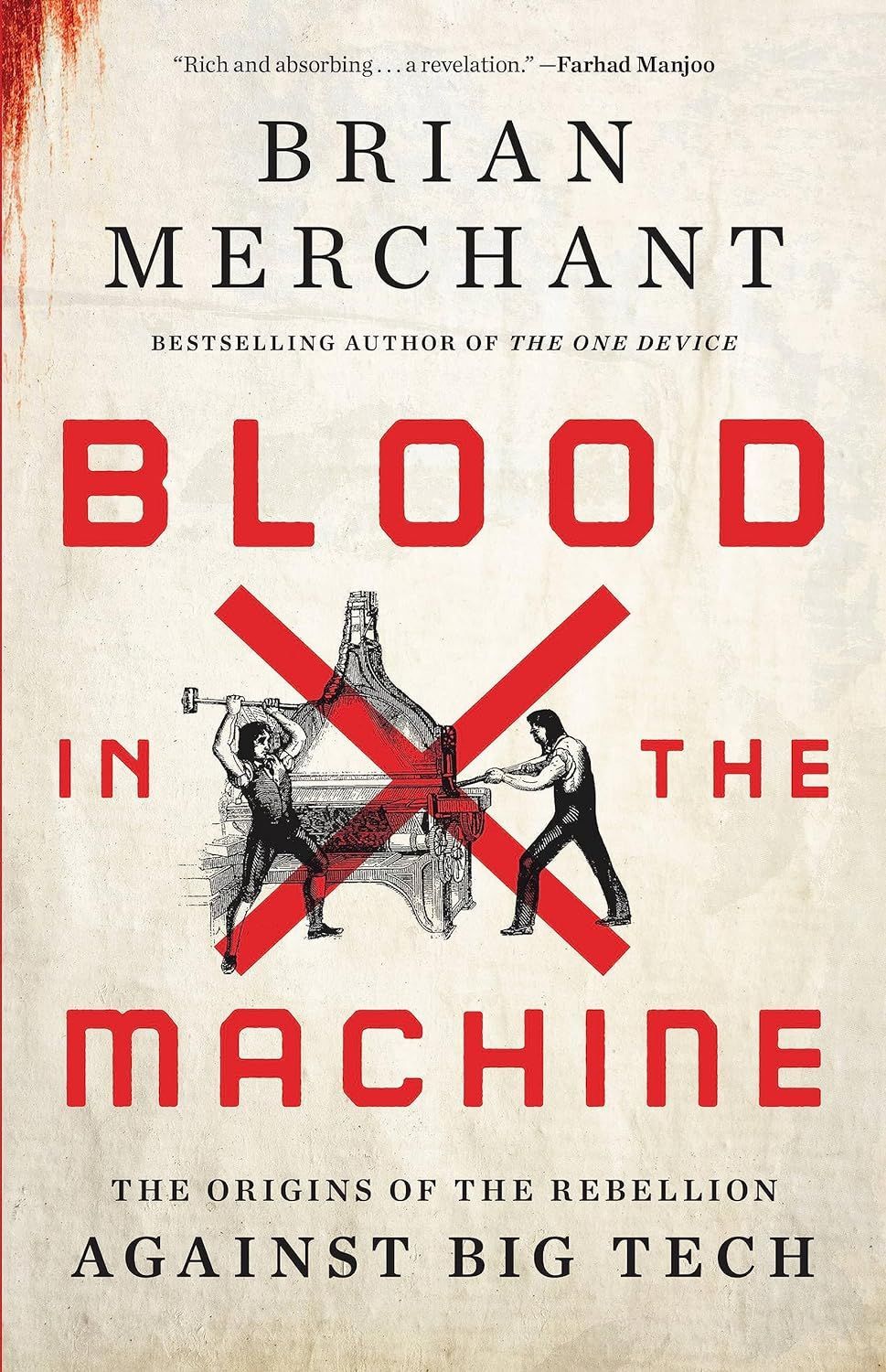

Blood in the Machine: The Origins of the Rebellion Against Big Tech by Brian Merchant. Hachette/Little, Brown and Co. 496 pages.

A HAMMER is good for smashing gig mills to pieces. A humble traffic cone placed atop an autonomous car’s hood is good for wreaking a different kind of mayhem: making the car stop abruptly and inconveniently. But those of us struggling to combat automation’s penetration into our nation’s schools need more than hammers and devices for shorting communication systems. We can’t smash the cloud computing infrastructure operated by Amazon Web Services that facilitates much of contemporary education. Nor can we pry apart the black-box algorithms narrowing students’ curriculum in the name of personalized learning. But we can do other things. Educators like the three of us and many of our colleagues, people who worry about Big Tech’s colonization of the American classroom, can look to labor resistance movements for inspiration.

A place to start is journalist Brian Merchant’s Blood in the Machine: The Origins of the Rebellion Against Big Tech (Little, Brown, 2023). He describes workers’ fights against powerful entrepreneurs and their profit-producing machines, aggressively deployed now for more than 200 years. The narrative dwells in particular on the Luddite uprising in the early years of the 19th century. Armed with hammers, picks, and pistols, the Luddites broke into textile shops and factories to destroy gig mills and power looms. These were not haphazard attacks. The Luddites were “organized, strategic, and intentional in their displays of power.” They destroyed machines that threatened not only the textile workers’ wages and employment but also their identities as skilled craftsmen.

Today, the label luddite is an epithet for someone afraid of technology and the change it can bring. Merchant’s book makes clear that Luddites did not fear automation in the sense of being afraid of the machines or longing for an idyllic past. On the contrary, as Merchant points out, clothworkers were often themselves intimately engaged in improving the technology they used. Some of them proposed paying for job retraining by taxing factory owners who implemented the automating machines, earning the workers the title of “some of the earliest policy futurists,” according to Merchant. These efforts—to use official channels at the local and parliamentary levels—failed, however. With their futures rapidly foreclosing, the clothworkers invoked the fictional Ned Ludd (alternatively, Ludlam), an apprentice stocking-frame knitter in the late 1700s who, the story went, responded to his master whipping him by destroying the machine. Inspired by his act of sabotage against a cruel employer, the Luddites campaigned to halt the spread of the “obnoxious machines.” Soon factory owners found threatening letters signed by Captain Ludd or General Ludd or King Ludd. The letters also allude to another hero of working people from Nottingham, Robin Hood. Merchant argues that the mutability of Ned Ludd served as an organizing symbol akin to a playful but potent meme.

Blood in the Machine elevates and contextualizes the Luddites’ history while also directly connecting their actions to contemporary challenges around an app-based gig economy. In today’s world, figures from the Luddite era find their heirs in livery drivers broken by Uber and in workers unionizing outside Amazon warehouses. Merchant draws other parallels too. He illuminates the similarities between the earliest factory owners and today’s Silicon Valley elite. Celebrity interlopers also make an appearance in Merchant’s story, from Lord Byron’s apparent passing interest in the Luddites to Andrew Yang’s short time in the spotlight for sounding the alarm about automation’s toll on workers’ prospects.

The next wave of automation, the three of us believe, should not be accepted passively. We can find inspiration from the Luddites and their distributed movement with its “openness [that] allowed different regions with different grievances and politics to accommodate Ned Ludd as their crusading avatar.” We can build solidarity in the manner of the Luddites, banding together to resist the forces of automation infiltrating our daily lives. Merchant’s message is even more urgent in the context of AI’s purported “inevitability.” We need to make its penetration of our schools something other than inevitable.

But here’s a problem: today’s automation is often immaterial, and it is embedded in schools, one of the most important sites where future workers are conditioned to accept it. Its creep into schools, and thus into early life, means that it naturalizes itself.

¤

According to a damning report from the ACLU, schools are the epicenter of the world’s most recent automation and surveillance revolution. The report details how the multibillion-dollar educational technology industry misleads schools about what its products can do. What does the ed-tech industry actually provide? Perhaps counterintuitively, precisely what critics have warned us about: more discrimination, more stress, and no verifiable improvements to safety. As detailed across the ACLU report, “student surveillance is not only ineffective as a safety measure, but it often harms students in the process and precludes schools from implementing more proven interventions.”

The ed-tech industry’s dubious record has not stopped schools from investing in junk technology that automates nearly every dimension of schooling, from attendance records to auto-grading and e-hall passes, from student-only, faux social networking platforms to mindfulness programs. To dramatize what this automation looks and feels like in education, we offer a vignette of a generic school in the United States in 2023 based on our fieldwork:

It’s 8:00 a.m. and another day in a United States high school is about to begin. Students hustle through the recently installed metal detector, which allegedly comes equipped with artificial intelligence that can identify weapons. The number of students being pulled aside to have their backpacks searched by a school resource officer only to find a school-issued laptop and a water bottle makes some students wonder just how intelligent these machines really are. Relieved that no one is flagged by the detectors on this particular day, the students move through the crowded halls to their classrooms.

The bell rings.

Inside their English class, students remove their hats and lower their hoodies. They look up at the state-of-the-art Verkada camera bolted to the classroom’s front wall. The camera streams video for the cloud-based facial recognition software the school is piloting to take attendance. The innovative technology, promised the sales rep, will remove the burdensome process of recordkeeping and improve daily efficiency. One student, a Black girl, reviews what she’ll say to her teacher because yesterday the system marked her absent. Would her teacher check with the substitute from yesterday, the girl wonders, and would the substitute remember? Did race shape this system’s oversight? The students put their hats back on and raise their hoodies.

The teacher welcomes everybody. She asks about their weekends. Her students want to know if she followed through on her promise to wait in line for the first screening of Beyoncé’s Renaissance film. She smiles. Of course—and it was so good! The classroom erupts with opinions. The teacher tells her students to open their laptops and complete today’s response question, which she used ChatGPT to create and then scheduled last night to appear in their learning management system.

Students reflect on how their favorite music has shaped their identities. Students use auto-complete to finish a thought. Some students, wary of being accused of searching for porn or being outed to their parents by the school’s monitoring software, avoid discussing their gender identities and sexual orientations. While her students write, the teacher rereads an email to her department head. She’s frustrated by the latest round of mandatory volunteering the school is asking of the teachers. Enough is enough; we need to be paid for our time, she thinks. She runs the email through a software’s tone detector. Her email scores low on confidence. She accepts the software’s recommended edits, rereads the email one last time, and clicks send.

The teacher tells the students to finish their thoughts. She projects her laptop onto the screen at the classroom’s front. A new chatbot’s interface appears. The teacher explains that for today’s final discussion of their protest music unit, they’ll be joined by three special guests: Billie Holiday, Fela Kuti, and Zack de la Rocha. They’ll be using the chatbot that embodies historical figures to conduct research about the three musicians and put them into conversation with each other. But they’ll need to check the chatbot’s responses. These machines, she warns, can’t be trusted.

Looking into the schools and classrooms of today, we can find some “smash”-able technology: the AI-equipped metal detector and the camera, for starters. They would make satisfying thunks. Like Ned Ludd’s army, teachers and students may find it invigorating, even cathartic, to demolish the machines that constrain what they do and who they are allowed to be.

Less obvious is what to do about automated systems impervious to blunt force. Take a school’s monitoring software—a report from the Center for Democracy & Technology shows that the platforms often result in teachers adopting punitive practices and nondominant students experiencing increased harm, much as happened with the Black girl in our vignette. Here, employing the Luddite strategy to wreck a school-issued laptop is insufficient; the software exists beyond the device.

For Merchant, the diffuse, often invisible ways in which automation operates today are one reason why contemporary workers’ fury has not translated into Luddite-like violence. People’s incomplete understanding may be changing, though, suggests Merchant: “[T]he modes of the modern factory owners’ exploitation are becoming clearer to workers again,” hence their recent targeting of Google’s private buses and Cruise’s driverless cars.

We are similarly seeing promising signs of ferment in how students are confronting the outsourcing of their education at large. High school students walked out of school to protest a personalized learning platform funded by the Chan Zuckerberg Initiative; college students successfully petitioned their university to end its contract with Proctorio, an online proctoring company; young people chanted “Fuck the algorithm” outside of the UK’s Department for Education; teachers in San Francisco rallied after a new payroll system threw the district into a state of emergency. Resistance is not futile.

Schools are bastions for instilling civic values and decision-making, and the lessons we teach now about automation, surveillance, and human resistance are among the most important that they can offer. Merchant reminds us that the Luddites only “lost” due to the historical rewriting of their efforts and aims. Yet if the lessons of the Luddites are limited to breaking things to defend a way of life, then we have done teachers and students a disservice. There are other avenues for subversion that are more creative and more playful.

¤

The Luddites used the tools at their disposal and did so through collective action. Merchant details the day-to-day organizing efforts of the movement’s leaders. We are ushered into a clandestine world of codes and oaths, of backroom meetings and nighttime training. The scheming makes for entertaining reading. But beneath the private planning and public sabotage lurks a more lasting lesson: movements to dismantle automation’s physical infrastructure often depend on building relational infrastructure. Tight-knit communities are extraordinarily important here: they buffered the Luddites from harm and fostered creative thinking rather than merely alienation among adherents and their allies. Increasingly finding themselves wrung out by those in power, these communities coalesced around shared causes that overlooked intragroup differences. This opened space for women, Merchant tells us, to claim the nom de guerre Lady Ludd and charge into markets to demand fair food prices from shop owners and food suppliers. It worked. The “auto-reductions,” as they were called, demonstrate the power of people working together to force change. Similarly, resistance to automation can be creative and provide openings to bring myriad others into the tent.

In his rousing book Resisting AI: An Anti-fascist Approach to Artificial Intelligence (Bristol University Press, 2022), scholar Dan McQuillan speaks to the specific role that organizing and unions play in this present moment. The task for workers’ and people’s councils, he writes, “is to forge, through struggle, a new kind of hammer to disrupt the naturalization of AI.” Historical Luddism, he goes on, shows us that a “commitment to resistance […] creat[es] space for the construction of the commons.” Ultimately, the Luddites’ militancy and commitment to resistance might be a necessary entry point for how laborers, along with teachers, students, and caregivers, can take an antagonistic stance toward AI and automation, and create a new “commons.” The real work in schools includes building relationships of care for one another that tap into a sense of creative play, and into the capacity of young people to imagine alternative and hopefully better worlds.

Blood in the Machine arrives at a time when powerful venture capitalists are publishing techno-optimist manifestos that gush with the romance of the machine. Merchant’s book is a counterpunch: the Luddites and gig workers demonstrate how mindless automation might be stopped. Workers can “learn” to intervene at the local level. They can resist AI’s naturalization and their own dehumanization. They can resist the kind of “bossware” that insidiously monitors people’s communications and quantifies their productivity. We want to emphasize the word learn (and the word play, with its emphasis on creativity) to highlight the vital role of education (along with a new “commons”) in beating back automation’s sprawling tentacles.

LARB Contributors

Antero Garcia is an associate professor in the Graduate School of Education at Stanford University and the vice president of the National Council of Teachers of English. His most recent books are Civics for the World to Come: Committing to Democracy in Every Classroom (Norton, 2023) and All Through the Town: The School Bus as Educational Technology (University of Minnesota, 2023).

Charles Logan is a doctoral candidate in the Learning Sciences program at Northwestern University.

T. Philip Nichols is an associate professor of English Education at Baylor University. He is the author of Building the Innovation School: Infrastructures for Equity in Today’s Classrooms (Teachers College Press, 2022) and co-editor, with Antero Garcia, of Literacies for the Platform Society: Histories, Pedagogies, Possibilities (forthcoming from Routledge).
