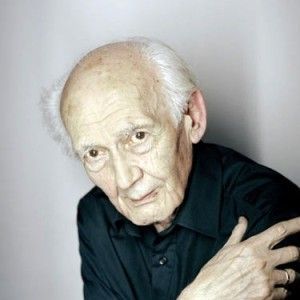

Disconnecting Acts: An Interview with Zygmunt Bauman Part I

Part I of a two-part interview with one of Europe’s foremost thinkers, Zygmunt Bauman.

By Arne De Boever and Efrain Kristal, November 11, 2014

IN THE FOLLOWING INTERVIEW, Efrain Kristal, professor and chair of UCLA’s Department of Comparative Literature, and LARB’s philosophy/critical theory editor Arne De Boever talk with the renowned Polish social theorist Zygmunt Bauman about the full range of his work.

¤

I/ Modernity, Postmodernity, Liquidity

ARNE DE BOEVER / EFRAIN KRISTAL: Did your military experiences as a young man — particularly those involving the liberation of your native Poland in World War II — have a bearing on your earliest ideas when you became a professor of sociology at the University of Warsaw?

ZYGMUNT BAUMAN: They must have, mustn’t they? How could it be otherwise? Be they military or civilian, life experiences cannot but imprint themselves (the more heavily, the more acute they are) on life’s trajectory, on the way we perceive the world, respond to it, and pick the paths to walk through it. They combine into a matrix of which one’s life itinerary is one of the possible permutations. The point, though, is that they do their work silently, stealthily so to speak, and surreptitiously: by prodding rather than spurring, and through the sets of options they circumscribe rather than through conscious, deliberate choices. Stanisław Lem, the great Polish storyteller as well as scientist, once tried, not entirely tongue in cheek, to compose an inventory of the accidents leading to the birth of the person called “Stanisław Lem,” and then to calculate that birth’s probability. He found that, scientifically speaking, his existence was well-nigh impossible (though the probability of other people’s births, scoring no better than his, was also infinitely close to zero). And so a word of warning is in order: retrospectively reconstructing the causes and motives of choices carries the danger of imputing structure to a flow, and logic (even predetermination) to what was in fact a series of faits accomplis poorly if at all reflected upon at the time of their happening. Contrary to the popular phrase, “hindsight” and “benefit” do not always come in pairs, particularly in autobiographical undertakings.

I recall here these mundane and rather trivial truths to warn you that what I am going to say in reply to your question needs to be taken with a pinch of salt.

From early childhood I was enthused by physics and cosmology and intended to devote my life to their study. Perhaps I would’ve tried to follow that intention if not for the vivid display of the human potential for inhumanity: the ugly monstrosity of war, of evil let loose, of the horror of continuously bombarded roads crowded with refugees, of the desperate yet vain attempts to escape the advancing Nazi troops, leading ultimately to the calamity of exile, which was, as I remember being aware even then, also a lifesaving marvel; all of it coming in quick succession. Then came the point-blank exposure, in a vagabond’s experience, to many and different ways of being human: a variegated patchwork of many and diverse modes of life, none of which appeared blameless and attractive enough to be uncritically, wholeheartedly embraced. Experiences of this sort, acquired early in life, might have (might: they didn’t have to, as the commonality of such experiences did not evacuate the faculties of physics or astronomy) influenced the gradual yet steady drift of my attention from the black holes and the stars born and dying up there to the black holes and stars born and dying down here.

That drift was considerably accelerated upon my return from exile (with the Polish Army formed in the USSR) by what I found in my impoverished country: haunted by chronic underemployment and bursting with social inequities and class and tribal animosities even before the German invasion, but now in addition devastated, humiliated, and demoralized by the years of foreign occupation, as well as run down, scorched, and gutted by passing frontlines. Most certainly, I found no shortage of black holes yelling to be filled and toxic debris of dead planets yearning to be disposed of. No wonder I switched to social and political studies. Having left the army, I took to my studies full time. From then on, the institutionalized logic of academe took over the steering wheel in navigating my trajectory, and so the rest was a dull story of its routine operations.

It “stands to reason” to connect the experiences sketched above with what were to become my lifelong academic interests: the sources of evil, social inequality and its impact, the roots and tools of injustice, the virtues and vices of alternative modes of life, the chances and limits of humans’ control over their history. But is the quality of “standing to reason” sufficient proof of being true? In his latest novel, Ostatnie Rozdanie (Last Deal, 2013), a book that, apart from being a fascinating story told in exquisite prose, is also a long meditation on the traps and ambushes, trials and tribulations that cannot but lie in store for people cheeky and insolent enough to attempt an orderly, comprehensive, and convincing reconstruction and retelling of their life itineraries, the formidable Polish writer Wiesław Myśliwski writes:

I lived willy-nilly. Without any sense of being part of the order of things. I lived by fragments, pieces, scraps, in the moment, at random, from incident to incident, as if buffeted by ebb and flow. Oftentimes I had the impression that someone had torn the majority of pages out of the book of my life, because they were empty, or because they belonged not to me but to someone else’s life.

But, he asks, “Someone will say: what about memory? Is it not a guardian of our selves? Does it not give us the feeling of being us, not someone else? Does it not make us whole, does it not brand us?” His answer: “Well, I would not advise putting trust in memory, since memory is at the mercy of our imagination, and as such cannot be a reliable source of truth about us.” Humbly, I accept.

Martin Jay once opined that the liquidity of my own life experiences has influenced my interpretations of liquid modernity. Having been in my life story a bird rather than an ornithologist (and birds are not known to be particularly prominent in the annals of ornithology), I really don’t feel entitled to go beyond a rather banal observation: that the experienced frailty of the settings in and through which I found myself moving must have (mustn’t it?) influenced what I have seen and how I saw it.

What impact did the circumstances of your departure from Poland have on your views on socialism when you made your new intellectual home in the United Kingdom? What are your impressions of Poland today?

My realization of the yawning fissure separating the socialist idea, which I wholeheartedly embraced, from the “really existing socialism,” which I found difficult to swallow, occurred long before Rudolf Bahro coined the latter phrase in 1977, and well before I was forced to leave Poland in 1968. One of my first publications in the British part of my academic life carried the title Socialism: The Active Utopia, which signaled its main message: the great historical accomplishment of the socialist idea was its acting as a utopia, laying bare the social ills endemic in the status quo and spurring remedial action. Without the presence of such a utopia, those ills would grow and proliferate uncontrollably, with the moral standards of society, together with the quality of life, bound to become the first, and perhaps the most regrettable, collateral victims of that growth. (Inadvertently, that old belief was to be retrospectively confirmed by the story of Western societies after the fall of the Berlin Wall.) From that message derived another belief: that declaring any kind of status quo to be the “socialist idea fulfilled” cannot but sound the death knell of what was its major, indeed paramount, role in history. In the longer run, such a declaration would inevitably have stripped the socialist utopia of that role (just as in the case of another utopia, that of democracy). Allow me a brief quotation from that book: the socialist project, I wrote,

can no longer preserve its character as the utopian spiritus movens of history, whilst remaining the counter-culture of capitalism alone. Hence, such changes as become prominent in contemporary socialist thinking derive their inspiration and force from the critique of both the major established systems of modern society.

Those words were published in Britain, but formed and matured in Poland. And they also, I would say today, apply to contemporary democratic thought.

Today, socialist utopia, with but few exceptions, is conspicuous mostly by its absence; but the absent are seldom influential, let alone capable of co-determining the tonus of human self-questioning and, in consequence, the direction of our shared human history. Well, contrary to Francis Fukuyama’s conjecture, history is anything but finished, and so the absence may yet prove but temporary. Signals of such a possibility have recently been multiplying. Among them are the rising alarms about the rampant, exorbitant growth of social inequality, unprecedented in the last 100 years (note the wide and widening echo and impact of the alarms/manifestoes published by Amartya Sen, Martha Nussbaum, Joseph Stiglitz, Göran Therborn, Stewart Lansley, Danilo Zolo, Guy Standing, François Bourguignon, Daniel Dorling, Richard Wilkinson and Kate Pickett, Robert and Edward Skidelsky, Mauro Magatti and Chiara Giaccardi, Thomas Piketty, and the unstoppably rising number of others, already too large for my list, however long, to claim comprehensiveness): alarms that at the moment seem to be the loudest of those currently sounding, probably the most timely and urgent, and perhaps also the most seminal in the longer run.

Today’s socialist utopia, whenever and in whatever form it appears, shares the quandary currently faced by all utopias: even if we succeed in pushing through our idea of what is to be done to make society better than it currently is, who is going to do it? The bane of socialist utopia, as much as of the competing social programs, is a severe and deepening crisis of agency. In the heyday of socialist utopia the agency of societal change seemed obvious and not a matter of contention: parties and movements plotted along the whole length of the political spectrum believed (or rather tacitly, axiomatically assumed) that such agency was provided by the sovereign territorial state, deemed as it was to be adequately equipped with the required volume of power (i.e., the capacity to get things done) and effective tools of politics (i.e., the ability to decide which of the proposed things need to be, and so are to be, done). Trust in the nation-state as a reliable historical agent caused the advocates of the socialist idea, as much as their rivals, to invest their hopes in taking over state power and to focus their strategy on that task. Today, though, as Benjamin Barber crisply put it in another alert/manifesto, titled If Mayors Ruled the World, “after a long history of regional success, the nation-state is failing us on the global scale. It was the perfect political recipe for the liberty and independence of autonomous peoples and nations. It is utterly unsuited for interdependence.” The reason for such a dramatic turn can be traced, in a nutshell, to the growing separation between power and politics, resulting in powers emancipated from political constraints and controls, and a politics suffering a constant, and growing, deficit of power.

Powers, and particularly those among them most heavily influencing the human condition and humanity’s prospects, are today global, roaming ever more freely (to draw on the work of the Spanish sociologist Manuel Castells) in the “space of flows” while ignoring at will the borders, laws, and internally defined interests of political entities, whereas the extant instruments of political action remain, as a century or two ago, fixed and confined to the “space of places.” Alternative “historical agents” are much in demand; the frantic search for them has become a salient mark of the present moment; and one may surmise that until they are found and put in place, debating the models of a “good,” or at least “a better,” society will seem an idle pastime and, except in the extreme margins of the political spectrum, won’t arouse much emotion.

As the South African novelist and philosopher J. M. Coetzee suggested in Diary of a Bad Year (2007), the traditional choice between “placid servitude on the one hand and revolt against servitude on the other” is now falling into disuse, and no longer captures the attitude assumed by most of the electorate toward those whom they elect to govern. A third attitude is fast growing in popularity and by now tends to be “chosen by thousands of millions of people every day”: “quietism, willed obscurity, or inner emigration,” as Coetzee puts it. It is tempting, in my view, to trace that tendency to the ever more pronounced breakdown of communication between the political elite and the rest. Two discourses, one of state politics and the other of the hoi polloi politics of daily life, run by and large in parallel and short-circuit only in sparse, fleeting moments, unloading potentially explosive excesses of resentment and rancour, as well as a modicum of the otherwise unemployed and festering supplies of the popular will to political engagement.

In the year that preceded your retirement from the University of Leeds you published Modernity and the Holocaust (1989), a celebrated book in which you argue that the methods of the crimes committed against the Jews by the Nazis are a product of modernity, and that we are far from immunized from them. How can contemporary societies immunize themselves from these products of modernity?

My point was that modernity was a necessary condition of the Holocaust, though not its sufficient condition. It was necessary because a project of the systematic and thorough destruction of a whole nation, an undertaking extended over a huge territory and many years, required the modern technology of industry and transportation as well as modern management (sometimes dubbed “scientific”) with its meticulous division of labor and strict bureaucratic rules of command and performance. What above all made that project intrinsically “modern” was its being a goal-oriented “project”; it seemed to follow Max Weber’s definition of modernity as the time of “instrumental rationality,” that is, of the tendency and capability to select the best (most efficient and least costly) means to attain set objectives.

Genocides as such are not modern inventions (even if that general name for the practices of mass murder and extinction was coined after the Holocaust and under its influence). Indeed, it is possible (even if still contentious among the experts) that the conquest of Europe by Homo sapiens, some 40 to 45 thousand years ago, started with the extinction of Homo neanderthalensis, though, according to the latest research conducted by Professor Chris Stringer of the Natural History Museum in London, the process took about 5,000 years to accomplish (and not “merely” 500, as previously thought). But genocides of the past (as well as those of the present, in parts of the globe “underdeveloped” by modern standards), related to tribal or religious hostilities, tended, and continue, to be either one-off bursts of soldierly rapacity and wantonness following the capture of new hostile territory, or short-lived, pogrom-style explosions of hostile emotions against neighbors. The Holocaust, a strictly modern phenomenon, stood out from other genocides by relying on cold-blooded, cool-headed calculation and planning, while trying its best to make the presence or absence of passions irrelevant to the success of the project. This is why it could be initiated, designed, and planned in the very heart of modern civilization, in a country famous for its philosophers, musicians, scientists, and poets.

As we know from Sigmund Freud’s studies and their follow-up by Norbert Elias, an integral part of modern history was also the “civilizing process,” which consisted in suppressing manifestations of hostility, aggression, cruelty, and bloodthirst, or at least eliminating them from view in daily interactions. One of the effects of that process was to render the show of emotions in public shameful: something to be avoided at all costs, however stressful the situation. Note that the objects of prohibition were the manifestations of emotions, not emotions as such. Erving Goffman’s “civil inattention” demanded a demonstrative lack of personal interest in the people around (such as avoiding eye contact or close and intrusive physical proximity), rather than a moral reform; that inattention was a stratagem meant to enable the cohabitation of strangers in densely populated modern cities, a cohabitation free from mutual violence and the fear thereof. It bore all the marks of a cover-up, rather than an elimination, of mutual enmity and aggressiveness. The civilizing process softened human conduct in public places rather than making humans more moral, friendly, and caring for others.

The modern demand for self-restraint and for desisting from violence against others is therefore not absolute but conditional, confined to certain kinds of behavior, certain categories of “others,” and certain sorts of milieus and situations. I’ve suggested that the two expedients allowing its suspension or cancellation are “adiaphorization” (i.e., the exemption of certain conducts and certain aspects of interrelation and interaction from ethical significance, thereby obliquely denying their potentially violent character) and the exclusion of certain categories of humans from the universe of moral obligations (i.e., the explicit or implicit denial of their humanity). Adiaphorization is nowadays commonly applied in an ever widening range of interhuman relations and actions, which means a lot for the ethical standards and moral practices of the present-day society of individualized consumers, and probably yet more for their immediate prospects.

For the issue you’ve raised, that of the degree (if any) of immunity of contemporary society to the morbid, holocaust-enabling “products of modernity,” the second expedient is, however, particularly relevant. We are trained daily by the opinion-making media as well as by political authorities to treat acts of exclusion, banishment, and exile as phenomena so ordinary, frequent, and ubiquitous that for all practical intents and purposes they are no longer visible, let alone shocking and disturbing to the moral conscience. The media offer massively popular shows of the Big Brother or Weakest Link type, in which repetitive, routine, and scheduled séances of exclusion invariably provide the widely cherished, ratings-hoisting highlights: the main foci of interest and, indeed, entertainment. Political authorities, with rising support among their electors, set aside categories of people to whose treatment the canonical moral commandments do not apply, or apply only in a severely cut-down measure: terrorists; people suspected of giving them shelter and so fit for the role of “collateral casualties” of drones and artillery fire; heretics or members of the wrong kind of sects; illegal immigrants; or the “underclass,” whose composition varies with circumstance, and which is no longer a social problem but a problem of “asocial behavior” and therefore of “law and order.”

All in all, it’s too early to declare the immunity about which you ask. The potentially morbid “products of modernity” are alive and well, at home as much as abroad, and, courtesy of a seriously deregulated arms trade notorious for avoiding control, permanently within reach and carrying the risk of falling into “the wrong hands.” Where modern industrial and organizational technologies meet timeless human enmities, explosions of violence and massive bloodletting are in the cards. It feels like living on a minefield: one knows that the soil is saturated with explosives and that explosions must and will happen, though one doesn’t know where and when.

Why was the notion of “postmodernity” so central to your analysis of the contemporary situation at a certain point in your writings, and why did you leave that notion behind, opting instead for “liquid modernity,” the rich and suggestive concept you coined, one that has loomed large in your writings for the last 15 years and inspired a considerable amount of commentary?

It all started from an overwhelming feeling that the categories I learned as a student to deploy in the study and analysis of current social realities (categories filed under the rubric of “modernity”) were increasingly and ever more blatantly ill-fitted to the task. The situation was akin to the “paradigm crisis” described by Thomas Kuhn in his eye-opening study of “scientific revolutions.” The fast-growing mass of “anomalies” made it less and less possible for the users of the paradigmatic categories to dismiss it as a collection of “freak,” “marginal,” or “abnormal” phenomena that could be ignored when composing the stories about society they were called upon to provide. Modernity no longer looked “as we knew and remembered it,” or as we deemed it to be. The need for rethinking and rearranging the inherited wisdom grew ever more obvious and urgent. The idea of the “postmodern,” already around and gaining in popularity, signaled that need and an intention to gratify it.

But as I explained in the interview I gave in 2004 to Milena Yakimova for Eurozine, an online journal, the concept of the “postmodern” was but a stopgap choice, a “career report” of a search still ongoing and remote from completion. Signaling that the social world had ceased to be like the one mapped using the “modernity” grid, that concept was, however, singularly uncommittal as to the features the world had acquired instead. It has done its preliminary, site-clearing job: it aroused vigilance and sent the exploration in the right direction. It could not, however, do much more, and so it soon outlived its usefulness; or, rather, it has worked itself out of the job … About the qualities of the present-day world we could and should say more than that it was unlike the old and familiar one. We had matured enough to afford (to risk?) a positive theory of the novelty. That much I felt from the start, and the collection of essays Intimations of Postmodernity (1991) seems to me, retrospectively, to have been a manifestation of that feeling.

“Postmodern” was also flawed from the beginning on another score: all disclaimers notwithstanding, it did suggest that modernity was over. Protestations did not help much, even ones as strong as Jean-François Lyotard’s (“one cannot be modern without being first postmodern”), let alone my own insistence that “postmodernity is modernity minus its illusions.” Nothing would help; if words mean anything, then a “postX” will always mean a state of affairs that has left the “X” behind.

I had (and still have) serious reservations about the alternative names suggested for our contemporaneity. “Late modernity”? How would we know that it is “late”? The word “late,” if legitimately used, assumes closure, the last stage. (Indeed, what else would one expect to come after “late”? Very late? Post-late?) A responsible answer to such questions may be given only once the period in question is definitely over, as in the concepts of “late Antiquity” or the “late Middle Ages,” and so the term presupposes much bigger mental powers than we can responsibly claim as sociologists, who, unlike soothsayers and clairvoyants, have no tools to predict the future and must limit ourselves to taking inventories of current trends. “Reflexive modernity”? I smelled a rat here. I suspected that in coining this term we were projecting our own, the professional thinkers’, cognitive uncertainty and caution upon the social world at large, or (perhaps inadvertently, but surely unwarrantedly) presenting our (quite real) professional puzzlement as (imaginary) popular prudence; whereas that world out there is lived, as Thomas Hylland Eriksen memorably put it, under the “tyranny of the moment,” being marked by the fading and wilting of the art of reflection. Ours is a culture of forgetting and short-termism, the two archenemies of reflection. I would perhaps embrace Georges Balandier’s surmodernité or Paul Virilio/John Armitage’s hypermodernity, were these terms not, like the term “postmodern,” too shell-like, too uncommittal to guide and target the theoretical effort.

I’ve tried to explain as clearly as I could why I have chosen the “liquid” or “fluid” as the metaphor for the present-day state of modernity; see particularly the foreword to my Liquid Modernity. I made a point there of not confusing “liquidity” or “fluidity” with “lightness,” an error firmly entrenched in our linguistic usage. What sets liquids apart from solids is the looseness and frailty of their bonds, not their specific gravity.

Inspiration to choose liquidity as the arch-metaphor of our present state was, to be sure, at hand, contained in the great physicist and Nobel Prize winner Ilya Prigogine’s pivotal 1996 study (with its telling-it-all title) The End of Certainty: the weakness of bonds between molecules accounts for one attribute that liquids possess and solids don’t. That attribute, which makes liquids an apt metaphor for our times, is the fluids’ intrinsic inability to hold their shape for long on their own. The “flow,” the defining characteristic of all liquids, means a continuous and irreversible change in the mutual position of parts that, due to the faintness of intermolecular bonds, can be triggered by even the weakest of stresses. Fluids, according to the Encyclopaedia Britannica, undergo for that reason “a continuous change in shape when subjected to stress.” Used as a metaphor for the present phase of modernity, “liquid” makes salient the brittleness, breakability, and ad hoc modality of interhuman bonds. Another trait contributes to the metaphorical usefulness of liquids: their “time sensitivity,” so to speak, again in contrast to solids, which could be described as contraptions to cancel the impact of time.

Many things “flow” in a liquid-modern setting, but in most cases this is a trivial, even banal observation. After all, to say that commodities or information “flow” is almost as pleonastic as the statements “winds blow” or “rivers flow.” What is truly a novel feature of the social world, and what makes it sensible to call the current kind of modernity “liquid” in opposition to other, earlier forms of the modern world, is the continuous and irreparable fluidity of the very things which modernity in its initial shape was bent, on the contrary, on solidifying and fixing: human locations in the social world and interhuman bonds, particularly the latter, since their liquidity conditions (though does not on its own determine) the fluidity of the former. It is “relationships” that are progressively elbowed out and replaced by the activity of “relating.” If one still unpacks the meaning of the word “relationship” in the pristine, still dictionary-recorded fashion, one can only use it, as Jacques Derrida suggested, sous rature [under erasure]; or one ought at least to remember that it is, to use Ulrich Beck’s terminology, a “zombie term.”

All modernity means incessant, obsessive modernization. (It’s erroneous to think of modernity as a state; it is a process. Modernity would cease being what it is the moment modernization ground to a halt.) And all modernization consists in “disembedding,” “disencumbering,” “melting” the solids, etc.; in other words, in dismantling the received structures or at least weakening their grip. From the start, modernity set out to deprive the web of human relationships of its past holding force; “disembedded” (i.e., “uprooted and set loose”) humans were, however, expected to seek new beds and dig themselves into them using their own hands and spades, even if they chose to stay in the bed of their birth. (“It is not enough to be a bourgeois,” warned Jean-Paul Sartre; “one needs to live one’s life as a bourgeois.”) So what is new here?

What is relatively recent is that while the “disembedding” goes on unabated, the prospects of “re-embedding” are nowhere in sight and unlikely to appear. In the incipient, “solid” variety of modernity, disembedding was a necessary stage on the road to re-embedding. It had merely an instrumental value in transforming what used to be given into a task (much like the intermediary “disrobing” or “dismantling” stage in the tripartite Arnold van Gennep/Victor Turner scheme of the rites of passage). Solids were melted not in order to stay molten, but in order to be recast in moulds up to the standard of a better designed, rationally arranged society. If there ever was a “project of modernity,” it was the search for the state of perfection, a state that puts paid to all further change, having first made changes uncalled for and undesirable. All further change could only be for the worse …

This is no longer the case, however. Bonds are easily entered but even more easily abandoned. Much is done (and more yet is wished to be done) to prevent them from developing any holding power. Long-term commitments with no option of termination on demand are decidedly out of fashion and not what a “rational chooser” would choose … Relationships, like the love portrayed by Anthony Giddens in his Modernity and Self-Identity (1991), are “confluent”: they last (or at least are expected to last) as long as both sides find them satisfactory. Let me add that because of the asymmetry between starting and finishing relationships of the “confluent” kind (starting calls for both sides’ consent, while for finishing the decision of one suffices), transience and uncertainty are built into the experience of both sides: there is no telling who will first resort to the exit option. And with other bonds in a flux similar to that of partnerships, the Lebenswelt, the subjectively held vision of the world, is fluid. Or, to put it in a different idiom, the world, once a stolid and incorruptible, rule-enforcing and rule-following umpire, has become one of the players in a game known to change its rules as it goes, and to do so in an apparently whimsical and hard-to-predict fashion.

In the second decade of the 21st century the brittleness, endemic temporariness, and “until-further-notice” character of bonds have become yet more salient (indeed, so obvious as to be rarely noticed, regarded as a problem, or allowed to prod reflection), since they started being aided and abetted by the online alternative to offline experience, and communities became more like “networks”: assemblies produced and constantly reproduced anew through the interplay of connecting and disconnecting acts.

¤


LARB Contributors

Arne De Boever teaches American Studies in the School of Critical Studies at the California Institute of the Arts, where he also directs the MA Aesthetics and Politics program. He is the author of States of Exception in the Contemporary Novel (2012) and Narrative Care (2013) and editor of Gilbert Simondon: Being and Technology (2012) and The Psychopathologies of Cognitive Capitalism: Vol. 1 (2013). He edits Parrhesia: A Journal of Critical Philosophy and the critical theory/philosophy section of the Los Angeles Review of Books. He is also a member of the boundary 2 collective and an Advisory Editor for the Oxford Literary Review.

Efrain Kristal is professor and chair of UCLA’s Department of Comparative Literature. He is also professor in UCLA’s Department of Spanish and Portuguese and in UCLA’s Department of French and Francophone Studies. He is the author of more than 90 scholarly articles and prologues, as well as many books. His recent publications include “Peter Sloterdijk and literature” for Sloterdijk Now (2012); “Art and Literature in the Liquid Modern Age” for The Blackwell Companion to Comparative Literature (2011); and the entries on “Poetry and Fiction” and on “Peruvian poetry” for The Princeton Encyclopedia of Poetry and Poetics (2012). He is an associate editor of The Blackwell Encyclopedia of the Novel (2010), has edited The Cambridge Companion to the Latin American Novel (2005), and co-edited The Cambridge Companion to Mario Vargas Llosa (2012) with John King.
